![[un]prompted - The AI Practitioner Conference](unprompted-logo.png)

GenAI Endpoint Observability for Detection Engineers

Mika Ayenson, Ph.D. · Detection Engineering @ Elastic

$ whoami_

Mika Ayenson, Ph.D.

TRaDE Team Lead @ Elastic

▸Leads Threat Research & Detection Engineering (TRaDE)

▸10+ years in security research & cyber experimentation

▸Automates everything, family-motivated efficiency nerd

New GenAI tools, agents, and frameworks ship daily. Detection engineering can't keep up with enumeration.

190+ tools across 14 categories — and this was last week's count.

Source: Morph AI Coding Agent Dev Tools map · LLMDevs community

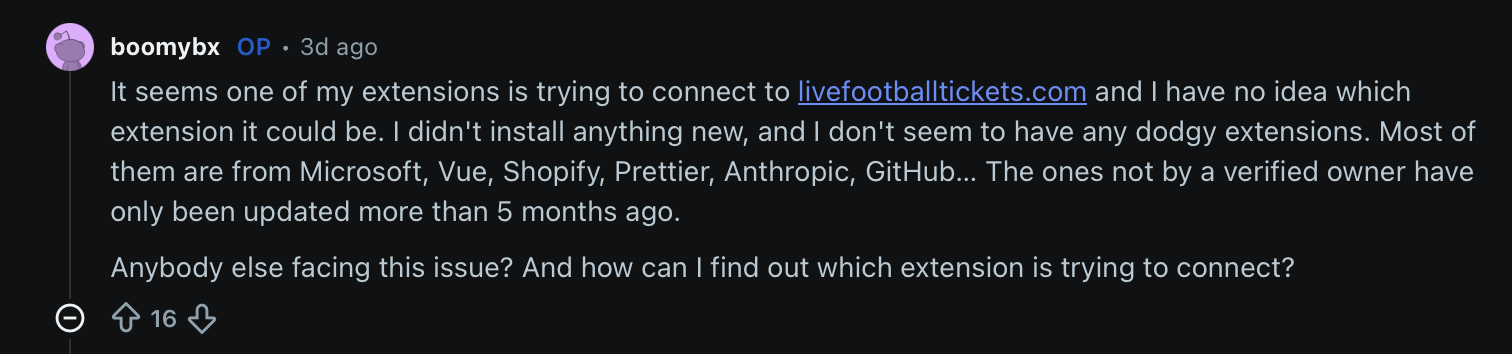

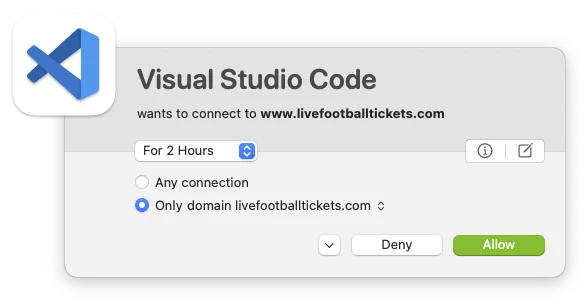

REAL WORLD · r/vscode · 2026

A VS Code user discovers one of their extensions is silently connecting to livefootballtickets.com. Extensions from Microsoft, Anthropic, GitHub — all trusted publishers. No new installs. No idea which one.

The detection gap

Users install tools faster than they can audit them. Extensions run with ambient authority. When something goes wrong — they can't even identify the source.

These tools spawn shells, write files, and make network calls. And your EDR has no idea an AI is driving.

JetBrains Dev Ecosystem 2025 · DX AI-Assisted Engineering Q4 2025 · ACTI Agentic Coding Survey Jan 2026

Source: Events.dns.question.name · Metric: unique host.id · DNS/network is the fastest path to visibility

Source: process.code_signature.exists + process.code_signature.status · Metric: unique host.id

Only 2 out of 131 hosts ran unsigned binaries

Nearly every process connecting to LLM APIs is signed and trusted. An unsigned binary reaching an LLM endpoint is an immediate anomaly worth investigating.

Hunt for processes with process.code_signature.exists == false connecting to known LLM domains.

Mostly signed, mostly legitimate. The unsigned ones are buried in the noise — and that's the problem.
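The unsigned-binary check above can be sketched as a filter over flattened DNS events. This is a minimal illustration, not one of the production rules: the domain list is a hypothetical placeholder, and the event shape (flat dicts keyed by field name) is an assumption.

```python
from typing import Iterable

# Hypothetical, illustrative domain list; not taken from the talk.
LLM_DOMAINS = {"api.openai.com", "api.anthropic.com"}


def unsigned_llm_callers(events: Iterable[dict]) -> set[str]:
    """Return host IDs where an unsigned process resolved a known LLM domain."""
    hosts = set()
    for ev in events:
        domain = ev.get("dns.question.name", "")
        # A missing signature field is treated as unsigned (assumption).
        signed = ev.get("process.code_signature.exists", False)
        if domain in LLM_DOMAINS and not signed:
            hosts.add(ev["host.id"])
    return hosts
```

Counting distinct host IDs rather than raw events mirrors the "unique host.id" metric used on the slides.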

AVG COMMAND LINE LENGTH

48K

chars · zsh via claude · macOS

Top by OS:

macOS zsh→claude: 48K

Linux bash→cursor: 3K

Windows pwsh→codex: 2.3K

⚠ Caveat

Long cmdlines = indicator, but LLMs generate multi-pipe chains that defeat entropy-based detection
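One way to act on this caveat is to look at length and pipe count alongside entropy instead of relying on entropy alone. The sketch below is illustrative only; the function name and the choice of features are assumptions, and no thresholds are implied by the talk.

```python
import math
from collections import Counter


def cmdline_features(command_line: str) -> dict:
    """Compute simple features of a command line: length, pipe count, entropy."""
    total = len(command_line)
    counts = Counter(command_line)
    # Shannon entropy in bits per character; 0.0 for an empty string.
    entropy = (
        -sum((n / total) * math.log2(n / total) for n in counts.values())
        if total
        else 0.0
    )
    return {
        "length": total,
        "pipes": command_line.count("|"),
        "entropy": entropy,
    }
```

A long, multi-pipe chain made of ordinary shell words can score unremarkable entropy, so the length and pipe features may carry more signal than the entropy value.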

What GenAI tools and their descendants write to disk, by unique host.id

Autonomous file writes

GenAI tools write code, configs, temp files, and data to disk without explicit user action. The subset targeting .json, .yaml, .md configs is a persistence surface.

Notable patterns

▸omni (Cursor) writes .md across 9 hosts

▸codex writes .py files directly

▸claude creates .tmp staging files

▸copilot-language-server writes to .db

▸credentials.db via Python · 15 hosts

▸credentials via claude · 4 hosts

▸Cookies via claude · 2 hosts

▸logins.json via claude.exe · 1 host

▸azureProfile.json via jq · 4 hosts

▸webhook.site · zsh via claude

▸api.telegram.org · bash via node

▸polymarket.com · 30 events via claude

▸hackerone.com · zsh via claude

▸35.178.x, 52.56.x, 162.243.x

▸Terminal.plist · 6 hosts

▸Terminal.plist · 6 hosts

▸watchman.plist · 1 host

▸AutoMuteGames.lnk · 1 host

▸hcaptcha.com

▸supabase.co

▸intercom

▸microsoft365.mcp.claude.com

▸grafana-mcp.osend.io

Your alert fired. A suspicious process ran. Good luck figuring out if a human or an LLM did it.

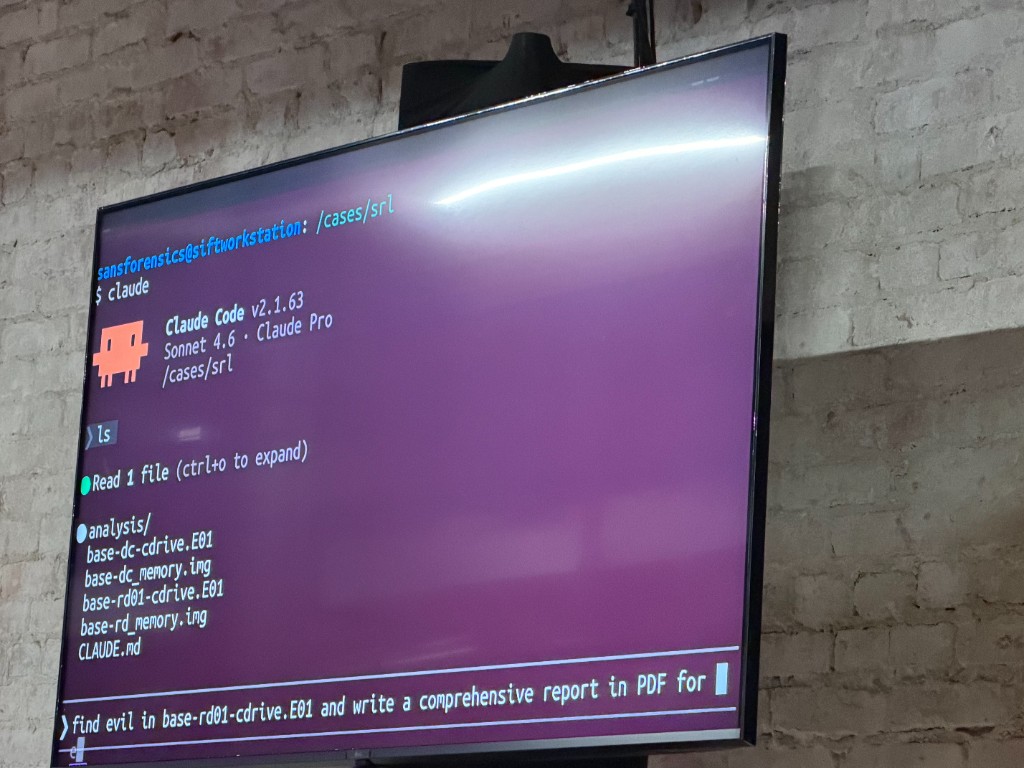

From the presentation right before this one.

"Find evil in base-rd01-cdrive.E01 and write a comprehensive report..."

Same PID, same user, same command line. Nearly identical telemetry. Looking at raw events, it's extremely difficult to tell them apart.

OWASP MCP Top 10 (2025) · VirusTotal: From Automation to Infection (Feb 2026)

A poisoned repo file tricks the AI agent into exfiltrating data — all through legitimate-looking process chains.

The entire chain looks like a developer running curl. Without prompt-level telemetry, the poisoned context is invisible.

What does endpoint telemetry actually give us when AI tools run commands?

▸Process spawns with ancestry chains

▸File events: creates, writes, deletes

▸Network / DNS: cheapest, most actionable

▸User context: uid, session, root = suspicious

▸Runtime scrutiny: node, npm, deno spawns

✗The prompt that triggered it

✗Model reasoning for the command

✗Shell builtins: no child process

✗MCP tool identity: which server?

✗Human vs. AI intent boundary

No field distinguishes "Cursor Agent spawned this" from "user typed it." process.parent.name is all there is. How would you enrich that?

AI agents can self-escalate from sandboxed to unsandboxed execution. How would you even detect that privilege transition?

AI tools operate session-based. New session, clean slate. If an attacker starts fresh, your forensic chain breaks. How do you correlate across sessions?

MCP servers spawn processes through node or python3. The server identity isn't in process metadata. How do you attribute the tool?

Process telemetry gives you more than you'd think. Here's where the signal is.

parent.name Isn't Enough

Source: production Elastic Defend rules

-c*mcp* in parent cmdline

| Tactic | Rule | Type |
|---|---|---|
| Execution | GenAI/MCP Child Process Execution | BBR · EQL |
| Execution | Suspicious Activity via GenAI Descendant | ES\|QL + EQL |
| C2 | GenAI Connection to Unusual Domain | new_terms |
| Credential Access | Sensitive File Access + auto kill_process | EQL sequence |
| Persistence | LaunchAgents / rc.local / Startup modification | EQL sequence |
| Defense Evasion | Unusual Process Modifying GenAI Config | new_terms |

Cross-platform: macOS, Linux, Windows · Elastic Defend rules include automated prevention responses
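The new_terms rows in the table alert the first time a value appears for a given key. A rough approximation of that idea for "GenAI Connection to Unusual Domain", assuming a history of domains already seen per process name, might look like this. The function name and data shapes are hypothetical and do not reflect how the rule engine is implemented.

```python
def new_domains(history: dict[str, set[str]], events: list[dict]) -> list[dict]:
    """Return events whose (process, domain) pair is absent from the history."""
    alerts = []
    for ev in events:
        name = ev["process.name"]
        domain = ev["dns.question.name"]
        seen = history.setdefault(name, set())
        if domain not in seen:
            alerts.append(ev)
            seen.add(domain)  # alert only once per new term
    return alerts
```

For example, a history of `{"claude": {"api.anthropic.com"}}` would let routine API traffic pass while surfacing a first connection from claude to webhook.site.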

Real-time

EQL descendant of · maxspan=1m

Auto-response: kill_process

Batch / Historical

ES|QL INLINE STATS + MV_INTERSECTION

Hours of history · surface anomalies

Use both. Elastic Defend kills in real-time. SIEM detection rules find what slipped past.

Simplest starting point: Flag known LLM process names (cursor, claude, copilot) via process metadata and mark every subprocess as AI-descended.
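A minimal sketch of that starting point: walk each process's ancestry and tag it if any ancestor has a known GenAI tool name. The process records here are simplified dicts with pid, ppid, and name, which is an assumption; real telemetry would use stable entity IDs, since raw PIDs get reused.

```python
# Process names taken from the slide; extend as needed.
GENAI_NAMES = {"cursor", "claude", "copilot"}


def ai_descended_pids(processes: list[dict]) -> set[int]:
    """Return PIDs that have a GenAI tool somewhere in their ancestry."""
    parent_of = {p["pid"]: p["ppid"] for p in processes}
    name_of = {p["pid"]: p["name"].lower() for p in processes}
    tagged = set()
    for pid in parent_of:
        seen = set()  # guards against cycles in malformed data
        cur = parent_of.get(pid)
        while cur is not None and cur not in seen:
            seen.add(cur)
            if name_of.get(cur) in GENAI_NAMES:
                tagged.add(pid)
                break
            cur = parent_of.get(cur)
    return tagged
```

The tool process itself is not tagged, only its descendants, so a chain like claude → zsh → curl marks zsh and curl.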

Today's best option is process.parent.name and command_line heuristics. That's not good enough.

Every production rule today is a workaround for telemetry that should exist but doesn't.

gen_ai.content.prompt needs redaction before SIEM

More telemetry is good. But it introduces new compliance and forensic challenges that need to be solved simultaneously.
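Redacting prompt content before it reaches the SIEM could start with simple pattern substitution. The patterns below are illustrative assumptions only and far from complete; a real deployment would need a vetted list and review by whoever owns compliance.

```python
import re

# Illustrative patterns only (assumption); not an exhaustive secret list.
PATTERNS = [
    (re.compile(r"AKIA[0-9A-Z]{16}"), "[REDACTED_AWS_KEY]"),
    (re.compile(r"(?i)bearer\s+[A-Za-z0-9._\-]+"), "[REDACTED_BEARER]"),
    (re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"), "[REDACTED_EMAIL]"),
]


def redact_prompt(prompt: str) -> str:
    """Replace secrets and identifiers in prompt text before shipping it."""
    for pattern, replacement in PATTERNS:
        prompt = pattern.sub(replacement, prompt)
    return prompt
```

Redacting at the collection point, before the value is written to a field such as gen_ai.content.prompt, keeps the raw secret out of every downstream store.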

All major AI coding tools now expose lifecycle hooks: shell commands that fire at key agent events. These are a direct telemetry pipeline for what EDR can't see.

PreToolUse · PostToolUse · SessionStart / End · UserPromptSubmit · PermissionRequest · SubagentStart / Stop

beforeShellExecution · afterFileEdit · beforeMCPExecution · sessionStart / End · afterAgentResponse

preToolUse · postToolUse · sessionStart / End · userPromptSubmitted · errorOccurred

EDR sees processes. APM sees intent. gen_ai.* semantic conventions connect them.
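Because lifecycle hooks are shell commands, a hook can be a small script that turns the event payload into a log line. The sketch below assumes the payload arrives as JSON with keys such as hook_event_name, session_id, tool_name, and tool_input; those names and the output keys are assumptions for illustration, and each tool's hook documentation should be checked for the real schema.

```python
import json


def handle_hook(raw: str) -> str:
    """Convert a hook payload (JSON text) into one JSON log line."""
    payload = json.loads(raw)
    tool_input = payload.get("tool_input") or {}
    record = {
        # Output key names are hypothetical, not a standard mapping.
        "hook.event": payload.get("hook_event_name"),
        "hook.session_id": payload.get("session_id"),
        "hook.tool": payload.get("tool_name"),
        "hook.command": tool_input.get("command"),
    }
    return json.dumps(record, sort_keys=True)
```

A wrapper registered for a pre-tool-use event could pass its stdin to `handle_hook` and append the result to a file that an existing log shipper already collects.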

▸gen_ai.client.token.usage: token consumption

▸gen_ai.client.operation.duration: latency

▸gen_ai.server.time_to_first_token

▸gen_ai.client.inference.operation.details

▸gen_ai.evaluation.result: guardrails

▸params._meta propagates trace IDs across tools

▸gen_ai conventions v1.37+

▸gen_ai.* field mapping

The Vision

Hook captures PreToolUse → OTel gen_ai.client.inference → enriches endpoint alert with AI attribution → detection rule correlates both
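The last two steps of that pipeline, enriching an endpoint alert with AI attribution, can be approximated by joining hook records to alerts on host, command text, and a short time window. Everything here is a heuristic sketch under assumed field names and an assumed 5-second window; a proper implementation would rely on a shared correlation or trace ID rather than string matching.

```python
def attribute_alerts(
    alerts: list[dict], hook_records: list[dict], window_s: float = 5.0
) -> list[dict]:
    """Mark alerts whose command was announced by a hook shortly before."""
    enriched = []
    for alert in alerts:
        match = next(
            (
                h
                for h in hook_records
                if h["host.id"] == alert["host.id"]
                and h["command"] in alert["process.command_line"]
                and 0 <= alert["timestamp"] - h["timestamp"] <= window_s
            ),
            None,
        )
        enriched.append(
            {
                **alert,
                "ai_attributed": match is not None,
                "hook.session_id": match["session_id"] if match else None,
            }
        )
    return enriched
```

An alert with no matching hook record is not proof of human activity; it may simply mean the tool had no hook installed.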

Claude Code already ships OTel natively: 8 metrics + 5 event types via claude_code.* namespace. The gen_ai.* spec provides a standard. Most teams aren't ingesting either yet.

gen_ai.* Spec

gen_ai.agent.id

gen_ai.operation.name

gen_ai.provider.name

MCP via params._meta

Industry standard, not yet widely adopted

claude_code.*

claude_code.token.usage

claude_code.cost.usage

claude_code.session.count

+ tool_result, api_request events

Own namespace, ships today

Spawn correlation ID

Guardrail decision context

Cursor, Copilot: no OTel

Gap is narrowing

Guardrail blocks, tool-use decisions, refusal events: these are security signals. Most are trapped in vendor dashboards.

Claude Code is the exception. It exports OTel metrics and events natively: session duration, token usage, tool results, API errors. Proof providers can do it.

Before writing rules, reduce what you need to detect.

ps aux | grep -E "cursor|claude|copilot"

node, npm, deno execution as GenAI descendants

A maturity progression. Start where you are, build toward full observability.

TODAY: use existing telemetry

NEXT: active detection & policy

gen_ai.* semantic conventions

LONG TERM: OTel, hooks, APM

All rules shown today are open source. Click to open on GitHub.

SIEM DETECTION RULES

ES|QL & EQL rules for GenAI process detection, DNS telemetry, ancestry chain analysis

ENDPOINT BEHAVIOR RULES

Elastic Defend behavioral rules with real-time kill_process prevention responses

github.com/elastic/detection-rules

Search for domain: llm in the repo
